The Nervous System for the Half-Trillion Dollar Brain

DePIN and the Missing Operating System

As $500 billion flows into AI infrastructure, centralized cloud giants hit a silicon and energy wall. The expansion of the Global Brain is being forced outward — and the missing piece is the operating system that ensures it becomes a foundation for freedom, not a bigger prison.

In 1869, the last spike was driven into the First Transcontinental Railroad at Promontory Summit, Utah. It was a moment that felt, to those who witnessed it, less like an engineering achievement and more like a metaphysical event — the two coasts of a continent suddenly pulled into a single nervous system. Capital flowed. People moved. Ideas propagated at a speed that would have been unrecognizable to the generation that preceded it. The world had not merely grown faster. It had changed its underlying architecture.

We are living through a comparable moment. The numbers are staggering, and they deserve to be stated plainly before we attempt to make sense of them.

In 2026, the five largest cloud and AI infrastructure providers — Amazon, Alphabet, Microsoft, Meta, and Oracle — will collectively spend between $660 billion and $690 billion on capital expenditure, the overwhelming majority directed at AI compute, data centers, and networking. This nearly doubles the already extraordinary levels of 2025. Amazon alone has committed $200 billion, mostly to data centers. Alphabet will spend between $175 billion and $185 billion. Microsoft is on track to deploy over $120 billion. Meta will invest between $115 billion and $135 billion. Add the Stargate project — a $500 billion joint initiative backed by OpenAI, SoftBank, Oracle, and the Trump administration, targeting full deployment by 2029 — and the scope of what is being constructed becomes almost hallucinatory. This is not a technology investment. This is a geological event in capital formation, more comparable to the construction of the interstate highway system or the electrification of the 20th-century world than to any software product cycle.

But here is where the story becomes genuinely interesting. The Global Brain — that vast, humming, distributed intelligence emerging from the world’s interconnected compute clusters — is hitting a wall. Not a metaphorical wall. A physical one, measured in watts and square kilometers and the finite capacity of aging electrical grids. And that collision between ambition and physical reality is forcing the most consequential architectural decision in the history of computing: the shift from centralized to decentralized infrastructure.

The question that follows is not whether this shift will happen. The economic logic makes it inevitable. The question is what happens to all that raw infrastructure once it exists. Who coordinates it? Who ensures that half a trillion dollars of distributed compute becomes something more than a fragmented collection of hardware nodes, humming in server rooms from Lagos to Ljubljana, owned by nobody in particular and serving no coherent purpose?

That question is the one this article intends to answer.

The Silicon Wall

There is a detail buried in Microsoft’s most recent earnings disclosures that deserves far more attention than it has received. The company disclosed an $80 billion backlog of Azure orders that cannot be fulfilled — not because Microsoft lacks customers, not because the software is broken, but because there is not enough power to run the servers. Demand has outpaced the physical world’s ability to generate and deliver electricity.

This is not an isolated problem. PJM Interconnection, the largest grid operator in the United States — serving over 65 million people across 13 states — projects it will be short six gigawatts of its reliability requirements by 2027. Northern Virginia, which hosts the world’s densest concentration of data center capacity, has halted new permits in several counties until power infrastructure catches up. Virginia grid operators have issued formal capacity warnings stretching through 2028.

The physics are unforgiving. The power draw of individual server racks has surged from 10 to 14 kilowatts five years ago to over 100 kilowatts today, a seven- to tenfold increase that requires fundamental redesigns of electrical distribution, cooling systems, and building infrastructure. Global data center electricity consumption is projected to hit 1,100 terawatt-hours in 2026, equivalent to Japan’s entire national consumption. Residential electricity prices in the United States are forecast to rise another 4% on average in 2026, after increasing 5% in 2025, a direct and measurable cost borne by ordinary households to subsidize the infrastructure ambitions of a handful of corporations.

The major cloud providers are responding with increasingly extreme measures. Microsoft signed a 2-gigawatt nuclear commitment with Constellation Energy through 2040 — the largest corporate nuclear agreement in history. Meta is investing $3.2 billion in a 2-gigawatt combined-cycle gas plant in Louisiana specifically to power its Hyperion data center campus. Oracle is funding Stargate campuses using on-site natural gas to circumvent grid dependency entirely. These are not software companies hedging their bets on energy policy. These are software companies becoming energy companies because they have no other choice.

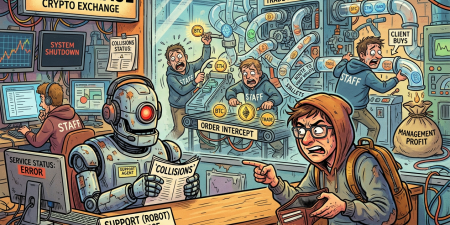

The saturation point in pictures: the centralized model is encountering physical limits that no amount of capital can resolve. A tiny bird, unbothered, observes from the top of the cracking building.

By the end of 2025, 241 gigawatts of data center electricity capacity sat in the development pipeline — a 159% increase from the beginning of that year. But only one-third of those projects were under active development. The rest are stalled, waiting for grid capacity that utilities, which have not needed to rapidly expand electricity generation in decades, are struggling to provide. Wood Mackenzie analyst Ben Hertz-Shargel put it plainly: utilities just do not have either the grid capacity or the generating capacity to build it fast enough to accommodate these new large energy demand centers. The capex growth of major data center developers is expected to decelerate for the first time since 2023, matching only 58% of the prior year’s growth rate despite Big Tech’s public commitments to double spending.

There is a word for what is happening here. It is saturation. The centralized model of AI infrastructure — the model that built the internet’s backbone, that gave us cloud computing, that made Amazon Web Services the most profitable division of the world’s largest retailer — is approaching the physical limits of what centralization can achieve. The empire of the hyperscalers is not collapsing. But it is encountering, for the first time, a constraint that money alone cannot resolve.

And so capital is looking outward. Toward the edges. Toward the distributed architecture that decentralization has always promised, and that economic necessity is now making inevitable.

The DePIN Revolution: Infrastructure Escaping the Center

Decentralized Physical Infrastructure Networks — DePIN, in the shorthand that has colonized the vocabulary of crypto-adjacent technology — are not a new idea. The concept has been circulating in various forms since the early days of blockchain. But the gap between concept and operational reality has, until very recently, been vast. What has changed is not the idea. What has changed is the numbers behind it.

As of early 2026, more than 650 active DePIN projects are operational, collectively coordinating over 41.8 million devices worldwide. The sector’s combined market capitalization stands at approximately $9 to $10 billion, having surpassed the oracle token category — a meaningful signal about where the crypto ecosystem believes value is migrating. On-chain revenue from DePIN protocols reached approximately $150 million in January 2026 alone, representing an 800% year-over-year increase for leading networks. According to Messari’s State of DePIN 2025 report, the sector generated approximately $72 million in full-year 2025 on-chain revenue — genuine payments from genuine customers for genuine compute services, not speculative token appreciation. The World Economic Forum projects DePIN market capitalization reaching $3.5 trillion by 2028.

Look at the individual projects and the numbers become concrete rather than abstract. Aethir, a decentralized compute network, reported $166 million in annualized revenue in Q3 2025, serving over 150 enterprise clients through a network of 430,000 GPUs across 94 countries. Its GPU utilization rate runs at 95% or higher — compared to an industry average of 15 to 30% for traditional cloud providers, where capacity is systematically overprovisioned to guarantee availability. Akash Network offers H100 GPU access at $1.20 to $1.80 per hour, compared to AWS pricing of $4.50 to $5.50 per hour — a 60 to 75% cost reduction for workloads that can tolerate the reliability trade-offs inherent in distributed infrastructure. Render Network has processed over 63 million frames for creative AI applications. Helium has deployed 115,000 wireless hotspots across partnerships with T-Mobile, AT&T, and Telefonica, building a community-owned wireless network that operates at a fraction of the cost of traditional carrier infrastructure. Hivemapper has mapped 29% of the world’s roads in two years, using dashcam-equipped vehicles whose owners are compensated in network tokens — a model that accomplished in 24 months what national mapping programs have failed to accomplish in decades.
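The Akash comparison can be checked with simple arithmetic. The sketch below uses only the hourly prices quoted above as inputs; the function and variable names are illustrative, and the figures are not live market data.

```python
def savings_pct(decentralized: float, centralized: float) -> float:
    """Percentage saved by paying `decentralized` instead of `centralized`."""
    return (1 - decentralized / centralized) * 100


# USD per H100 GPU-hour, (low, high), as quoted in the article.
AKASH_H100 = (1.20, 1.80)
AWS_H100 = (4.50, 5.50)

# Smallest saving: most expensive Akash price against cheapest AWS price.
worst = savings_pct(AKASH_H100[1], AWS_H100[0])
# Largest saving: cheapest Akash price against most expensive AWS price.
best = savings_pct(AKASH_H100[0], AWS_H100[1])

print(f"{worst:.0f}% to {best:.0f}%")  # prints "60% to 78%"
```

Comparing like with like (low against low, high against high) gives roughly 67 to 73 percent, so the quoted 60 to 75% band sits inside the full range of endpoint comparisons.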

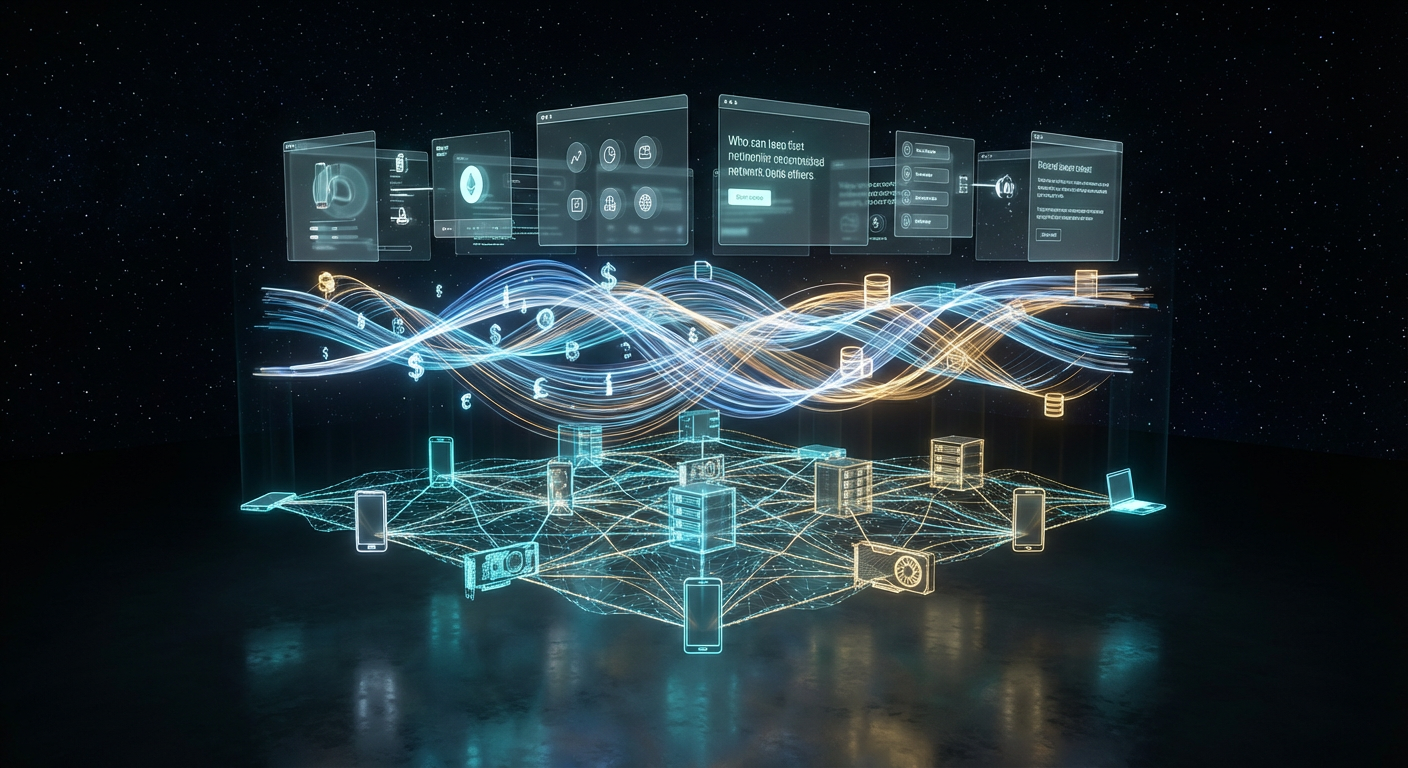

Outside the boardroom window: a suburban garage with a small satellite dish and GPU rack, happily blinking green — one of 41.8 million distributed nodes that are rewriting the infrastructure map.

The cost argument is, at this point, empirically established. Decentralized providers consistently deliver compute, storage, and bandwidth at 50 to 85% below hyperscaler pricing. Storj delivers cloud storage at 80% below AWS S3 rates. The Render Network offers GPU rendering at up to 85% savings compared to AWS and Google Cloud. These are not marketing figures drawn from best-case scenarios. They are the natural consequence of a fundamental economic reality: decentralized networks tap into existing hardware that would otherwise sit idle, eliminating the hyperscaler’s principal cost structure — land, construction, power infrastructure, and the organizational overhead of running hyperscale facilities at a utilization rate of 15 to 30%.

Venture capital has noticed. DePIN startups raised approximately $744 million across 165 documented deals between January 2024 and July 2025, with an additional 89 undisclosed transactions. Borderless Capital deployed a $100 million DePIN Fund III in September 2024. Entree Capital committed $300 million in December 2025. The institutional money is moving, and it is moving in the direction that economic gravity always flows: toward the model that accomplishes more with less.

The Coordination Problem: Why Raw Hardware Is Not Enough

But here is where the analysis must pause and ask a harder question. The history of infrastructure revolutions is not simply a story of physical assets being built. It is a story of the organizational and software layers that made those physical assets coherent and usable.

The transcontinental railroad was not merely steel rails and wooden ties. It required a unified gauge standard: the decision, eventually codified, that all tracks would be built to the same width so that trains could move across the entire network without being unloaded and reloaded at every junction. It required standardized timekeeping, which is why American railroads adopted standard time zones in 1883: not because anyone was philosophically interested in time, but because a distributed rail network required every stationmaster to operate from a common temporal framework. The physical infrastructure was necessary but not sufficient. The coordination layer was what made it a network rather than a collection of disconnected tracks.

The electrical grid required the same kind of standardization. Edison’s direct current and Tesla’s alternating current were not merely competing technologies. They were competing coordination philosophies. The resolution of that conflict — the adoption of AC as the universal standard — made it possible to build a single, interconnected grid rather than thousands of isolated local power systems.

DePIN is building its rails. But the rails, by themselves, do not constitute a network. A network requires something more: the software layer that establishes what runs on the rails, how it is priced, how it is secured, how it is accessed by the end user who neither knows nor cares about the underlying infrastructure, and how the humans and AI agents who operate within that environment experience a coherent, functional world rather than a cacophony of incompatible protocols.

This is the coordination problem that half a trillion dollars of AI infrastructure investment has created, and that no existing solution has resolved.

The Missing Operating System

Consider what it means, concretely, to use decentralized infrastructure today. A researcher who wants to train a model on Akash Network must navigate token acquisition, workload specification in a custom domain-specific language, bidding on compute providers, monitoring job execution across nodes, and managing payment in a native cryptocurrency. A developer who wants to build a graphical application that runs on decentralized compute faces an even steeper climb: there is no standard UI layer, no standard authentication system, no standard economic primitives for billing and settlement. The decentralized infrastructure exists, but the experience of using it resembles the early internet before HTTP — powerful in potential, impenetrable in practice.
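To make that friction concrete, here is a toy simulation of the steps a user currently carries by hand: holding tokens, describing a workload, choosing among provider bids, and settling payment. Every name in it (`Bid`, `Workload`, `select_bid`, `run_job`) is hypothetical; it does not reproduce Akash's SDL, its bidding rules, or any real SDK.

```python
from dataclasses import dataclass


@dataclass
class Bid:
    provider: str
    price_per_gpu_hour: float  # denominated in a hypothetical native token


@dataclass
class Workload:
    image: str   # container image to run
    gpus: int
    hours: float


def select_bid(bids: list[Bid], max_price: float) -> Bid:
    """Pick the cheapest bid at or below the user's price ceiling."""
    eligible = [b for b in bids if b.price_per_gpu_hour <= max_price]
    if not eligible:
        raise ValueError("no provider bid within the price ceiling")
    return min(eligible, key=lambda b: b.price_per_gpu_hour)


def run_job(wallet: float, job: Workload, bids: list[Bid],
            max_price: float) -> tuple[str, float]:
    """Return the chosen provider and the wallet balance after payment."""
    bid = select_bid(bids, max_price)
    cost = bid.price_per_gpu_hour * job.gpus * job.hours
    if cost > wallet:
        raise ValueError("insufficient token balance for this job")
    return bid.provider, wallet - cost


bids = [Bid("node-a", 2.0), Bid("node-b", 1.5), Bid("node-c", 3.0)]
job = Workload(image="trainer:latest", gpus=2, hours=10.0)
provider, remaining = run_job(100.0, job, bids, max_price=2.5)
print(provider, remaining)  # prints "node-b 70.0"
```

Even in this simplified form, the user is responsible for funding, pricing, and provider selection, which is exactly the work a coordination layer would be expected to absorb.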

This is not a criticism of the individual projects. Akash, Render, Filecoin, Bittensor — these are genuine technical achievements. The problem is that they are components looking for a system. GPUs without a scheduler. Storage without a filesystem. Compute without an operating system in the meaningful sense: a unified layer that abstracts the complexity of the underlying hardware and presents the user — whether that user is a human developer, an enterprise customer, or an AI agent — with a coherent environment in which to operate.

The history of computing tells us exactly what happens when this layer exists versus when it does not. Before operating systems, computers were programmed by directly manipulating hardware — toggle switches, punch cards, bare metal. The introduction of operating systems — first batch processing systems, then time-sharing systems, then the Unix philosophy, then graphical operating systems — did not merely make computers easier to use. It made computers useful to people who were not computer engineers. It converted specialized machinery into general-purpose infrastructure.

The same dynamic is playing out at civilizational scale in the DePIN ecosystem. The hardware is being built. The compute is coming online. The question is: what is the operating system that converts this hardware from a collection of independent nodes into a coherent, programmable, usable environment?

GRIDNET OS is attempting to be that layer — and it is the first project in the world to attempt it in its full scope. It occupies a position in the decentralized infrastructure stack that has no precise precedent, because the infrastructure stack it addresses has no precise precedent. It is not a blockchain. It is not a cloud provider. It is not a protocol for a single service category. It is what its name implies: an operating system for a decentralized world.

The monopoly guy, the oracle of finance, and the social media emperor walk into a boardroom. Nobody’s laughing. Decentralization doesn’t need their permission.

The scope of what a genuine decentralized operating system must accomplish is worth spelling out, because the gap between “decentralized compute exists” and “decentralized compute is usable by the humans and AI systems that need it” is wider than most infrastructure discussions acknowledge. It must handle data exchange between heterogeneous nodes — not merely storage, but the movement of structured and unstructured data across a network where nodes appear and disappear, where latency is variable, where trust between participants is mediated by cryptographic proof rather than organizational hierarchy. It must handle value transfers — a built-in economic layer that allows compute providers to be paid and compute consumers to pay, in real time, without the transaction costs and counterparty risks that plague current token-based payment systems. It must handle decentralized storage in a way that makes data useful for the AI agents and human applications that depend on it — not merely replicated across nodes, but organized, indexed, and retrievable. And it must handle the graphical application layer — the user interface that makes this infrastructure accessible to human beings who are neither cryptographers nor systems engineers.
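One way to picture those four responsibilities is as a single interface that applications program against. The sketch below is purely illustrative: the `DecentralizedOS` protocol, its method names, and the in-memory stand-in are assumptions made for this example, not GRIDNET OS's actual API.

```python
from typing import Protocol


class DecentralizedOS(Protocol):
    """Hypothetical surface covering the four duties described above."""

    def send(self, node_id: str, payload: bytes) -> None: ...           # data exchange
    def transfer(self, sender: str, receiver: str, amount: int) -> None: ...  # value transfer
    def store(self, key: str, data: bytes) -> None: ...                 # storage
    def fetch(self, key: str) -> bytes: ...
    def render(self, view: str) -> str: ...                             # user-facing layer


class InMemoryOS:
    """Toy single-process stand-in; a real system would be distributed."""

    def __init__(self, balances: dict[str, int]) -> None:
        self.balances = dict(balances)
        self.inbox: dict[str, list[bytes]] = {}
        self.blobs: dict[str, bytes] = {}

    def send(self, node_id: str, payload: bytes) -> None:
        self.inbox.setdefault(node_id, []).append(payload)

    def transfer(self, sender: str, receiver: str, amount: int) -> None:
        if amount <= 0 or self.balances.get(sender, 0) < amount:
            raise ValueError("invalid or unfunded transfer")
        self.balances[sender] -= amount
        self.balances[receiver] = self.balances.get(receiver, 0) + amount

    def store(self, key: str, data: bytes) -> None:
        self.blobs[key] = data

    def fetch(self, key: str) -> bytes:
        return self.blobs[key]

    def render(self, view: str) -> str:
        return f"[{view}] nodes={len(self.inbox)} objects={len(self.blobs)}"


system: DecentralizedOS = InMemoryOS({"consumer": 10})
system.transfer("consumer", "provider", 4)  # pay a compute provider
system.store("result", b"model weights")
print(system.render("dashboard"))  # prints "[dashboard] nodes=0 objects=1"
```

The point of the sketch is the shape rather than the implementation: a caller sees one coherent surface, whatever networks sit behind each method.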

This last point is more radical than it might appear. The DePIN ecosystem, like most of the crypto ecosystem, has historically been built for technical users by technical users. The graphical interface layer — the thing that made Windows and macOS and iOS the actual adoption events in computing history — has been almost entirely absent from decentralized infrastructure. An operating system that spans the full stack, from GPU cycles at the bottom to the graphical interface that a human sees at the top, changes the fundamental nature of what is possible. The same decentralized environment that serves AI compute workloads can serve the human beings who commission, monitor, and act upon those workloads. External AI computational engines and decentralized AI agentic networks become participants within this environment, not controllers of it. Full-stack coherence is not merely a feature. It is the difference between a powerful but unusable technology and a technology that can actually be adopted at civilizational scale.

The result is not merely a cheaper version of AWS. It is a different kind of thing entirely: an environment in which the AI agents and the human beings who interact with them operate in a system that is, by its architecture, resistant to capture, censorship, and the consolidation of power in any single entity’s hands.

The Civilizational Stakes

There is a tendency, in discussions of infrastructure, to focus on the economic dimension — the cost savings, the market projections, the venture capital allocations. These are real and important. But they are not the full story, and focusing on them exclusively misses what is actually at stake in this moment.

The AI systems being built on the infrastructure described in this article will, within the decade, touch nearly every significant decision made in human societies. Medical diagnoses. Financial allocations. Legal determinations. Educational content. Political discourse. The distribution of attention, opportunity, and resources across human populations. The question of who controls the physical infrastructure on which these systems run is not merely a question of market economics. It is a question of power — of who shapes the conditions under which intelligence, artificial and human, operates in the world.

The centralized model concentrates that power in a small number of organizations — currently five companies spending $660 to $690 billion per year to ensure that the physical substrate of AI intelligence runs through servers they own and control. This is not, in itself, evidence of malice. The hyperscalers have built extraordinary things and will continue to do so. But the concentration of physical AI infrastructure in a small number of hands creates a single point of political and economic leverage that history suggests will eventually be applied — whether through regulatory capture, economic coercion, or the simple organizational tendency of large institutions to serve their own interests over those of their users.

Consider what Microsoft’s $80 billion unfulfillable Azure backlog actually represents. It is not merely a supply chain problem. It is a structural gate: the organizations that can access frontier AI compute are those that Microsoft decides to serve, at prices Microsoft sets, under terms of service Microsoft drafts, subject to legal jurisdictions in which Microsoft operates. The entire edifice of the AI economy rests on decisions made in a small number of boardrooms, in a small number of countries, by a small number of people. This has always been true of physical infrastructure to some degree — railroads were monopolies, utilities were regulated cartels — but never before has the concentration been so extreme relative to the importance of the underlying resource.

Decentralization, properly implemented, is structurally resistant to this kind of capture. A network in which the physical infrastructure is owned by millions of independent participants, coordinated by cryptographic protocol rather than organizational hierarchy, cannot be switched off by a regulatory order or a board vote. It cannot be throttled at the convenience of a dominant player. It cannot be leveraged as a tool of political coercion in the way that cloud infrastructure — subject to terms of service, data residency requirements, and the enormous soft power of the companies that control it — inevitably can be.

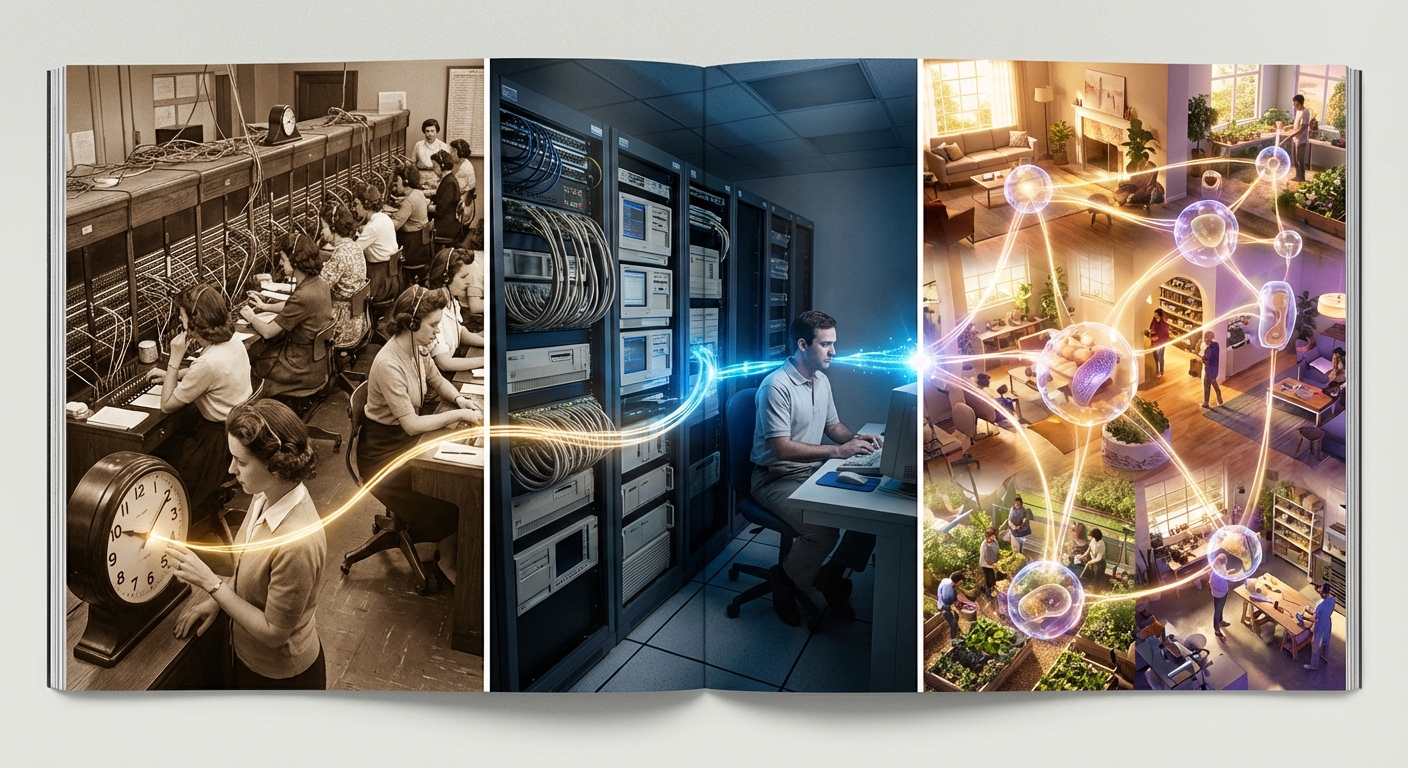

The Inevitable Architecture

In the early 1990s, the internet was a niche technology used by academics, defense contractors, and a small number of enthusiasts who were willing to navigate its considerable usability barriers. The transition to mass adoption did not happen because the underlying technology changed dramatically. The TCP/IP protocols that power the modern internet are essentially the same ones that existed in 1993. What changed was the addition of the World Wide Web — a coordination layer, built on top of the existing infrastructure, that made it possible for people who were not computer scientists to navigate, publish, and interact within the network.

The transition did not happen slowly. It happened with the speed and force of any change whose time has arrived: the moment when the underlying infrastructure is mature enough, the coordination layer is coherent enough, and the economic incentives are aligned well enough for adoption to become self-reinforcing. The journey from the launch of Mosaic in 1993 to 50% internet penetration in the United States took approximately eight years. The transition was, in retrospect, both faster than pessimists expected and more consequential than optimists had imagined.

The DePIN ecosystem is at an earlier stage than the internet was in 1993, but the structural conditions are more analogous than they might appear. The underlying infrastructure exists and is operational. The economic incentives — driven by the energy wall, the silicon constraints, and the cost differential between centralized and decentralized compute — are now strongly aligned with adoption. What is missing is the coordination layer that makes the infrastructure usable by the full range of participants who will need to use it: enterprise AI operators, independent developers, AI agents operating autonomously, and the human beings who will ultimately interact with the products and services these systems produce.

More than half a trillion dollars is flowing into AI infrastructure in 2026. The silicon and energy constraints of centralized clouds are not temporary problems that will be solved with more spending; they are physical limits that will persist and intensify as AI compute demand continues to grow exponentially. The economic logic of decentralized infrastructure is, at this point, established empirically rather than theoretically. The hardware is being built. The nodes are coming online. The 41.8 million devices already participating in DePIN networks are the early signal of a distribution that will, within this decade, encompass hundreds of millions.

What those hundreds of millions of devices need is a nervous system — a coherent software layer that transforms their raw capacity into something intelligible, useful, and resistant to capture. The transcontinental railroad needed standard gauge. The electrical grid needed alternating current. The internet needed TCP/IP and then HTTP. Decentralized AI infrastructure needs an operating system that can do for distributed compute what all of those coordination layers did for their respective infrastructure revolutions: convert a collection of powerful but disconnected components into a network whose value exceeds the sum of its parts by an order of magnitude.

The $660 to $690 billion being poured into AI infrastructure in 2026 will build an extraordinary amount of hardware. The question that will determine whether that hardware becomes a foundation for genuinely distributed, autonomous digital life, or simply a more expensive and geographically dispersed version of the centralized systems that preceded it, is the question of what operating system runs on top of it.

That question does not yet have a settled answer. But the answer is coming, with the same inevitability that characterized every previous infrastructure revolution. The rails are being laid. The gauge standard is being negotiated. The coordination layer is being built. And when it arrives — when the nervous system finds its brain — the Global Brain will not merely be larger. It will be, for the first time, genuinely free.

GRIDNET Magazine covers the intersection of decentralized technology, AI infrastructure, and the emerging architecture of digital autonomy. GRIDNET OS is a project of the GRIDNET ecosystem. For technical documentation and ecosystem updates, visit gridnet.org.